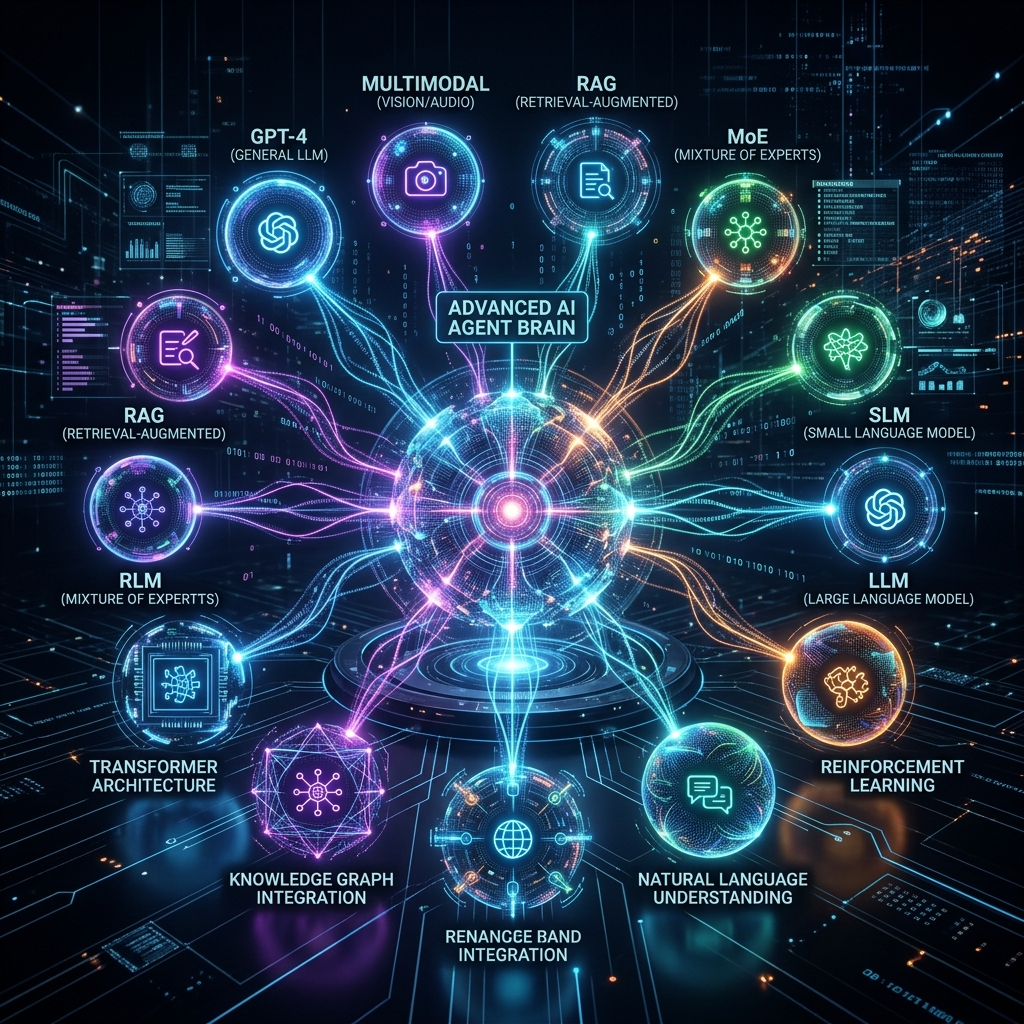

Artificial Intelligence has moved far beyond simple chatbots. In 2026, AI agents are autonomous systems capable of reasoning, planning, retrieving knowledge, interacting with tools, and executing tasks. These systems rely on multiple types of Language Models (LMs) working together rather than a single AI model.

Modern AI architectures combine specialized models designed for reasoning, perception, knowledge retrieval, and task execution. This modular structure allows AI agents to operate more efficiently and solve complex problems across industries such as software development, healthcare, finance, and automation.

In this article, we explore 11 types of language models and related techniques currently used in AI agents, including widely discussed approaches such as GPT, RAG, HRM, and multimodal models, along with GitHub resources for developers and researchers.

What Are Language Models in AI Agents?

Language models are deep learning systems trained on massive datasets to understand, generate, and reason with human language. Most modern models use the transformer architecture, whose self-attention mechanism lets each token weigh its relationship to every other token in the context.
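
To make the idea of processing context and relationships between words concrete, here is a minimal sketch of scaled dot-product attention, the core operation in transformers. It uses plain Python lists and made-up two-dimensional token vectors; real models add learned projections, multiple heads, and tensor libraries, none of which are shown here.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    dim = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(dim).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs

# Toy embeddings for three tokens (illustrative numbers, not real data).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
contextual = attention(tokens, tokens, tokens)
```

Each row of `contextual` is a blend of all three token vectors, weighted by how similar they are to the token in that position.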

AI agents use these models to perform tasks such as:

- Natural language conversations

- Code generation

- Knowledge retrieval

- Data analysis

- Workflow automation

- Multimodal reasoning

Instead of relying on a single model, modern AI agents orchestrate multiple models, each responsible for a specialized capability.

11 Types of Language Models

1. GPT Models (Generative Pre-trained Transformers)

GPT models are among the most widely used language models in AI applications. These models are trained on massive datasets to generate coherent text, solve problems, and assist with coding, research, and content creation.

Key Features: Natural language understanding, text and code generation, conversational AI capabilities, context-aware responses.

GitHub Resources: GPT-2, GPT-3

2. Small Language Models (SLMs)

Small Language Models are optimized for efficiency and lower computing requirements. Unlike massive LLMs, these models can run locally on devices such as smartphones, laptops, and embedded systems.

Key Features: Lightweight architecture, faster processing, lower infrastructure costs.

GitHub Resource: Phi-2

3. Multimodal Language Models (MLLMs)

Multimodal models can process multiple types of input simultaneously, including text, images, audio, and video. These models allow AI agents to understand the world beyond text-based data.

Key Features: Cross-modal reasoning, image and text understanding, visual content analysis.

GitHub Resource: LLaVA

4. Vision-Language Models (VLMs)

Vision-Language Models focus specifically on combining visual perception with language understanding. These models are widely used in robotics and computer vision applications.

Key Features: Scene understanding, image-based reasoning, visual question answering.

GitHub Resource: CLIP

5. Retrieval-Augmented Generation (RAG)

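RAG is a technique rather than a separate model type: the system retrieves relevant passages from external sources and adds them to the prompt before the language model generates a response. Grounding answers in retrieved text helps keep them accurate and current, even when the information postdates the model's training data.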

Key Features: Access to external knowledge bases, reduced hallucinations, real-time information retrieval.

GitHub Resource: RAG
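
The retrieve-then-generate flow can be sketched in a few lines. This is a simplified illustration, not a production setup: the `DOCUMENTS` list is invented, retrieval uses plain keyword overlap instead of the vector search most RAG systems rely on, and the final call to a language model is left out, so the sketch stops at the augmented prompt.

```python
import re

# Hypothetical knowledge base; a real system would query a document store.
DOCUMENTS = [
    "The billing API rate limit is 100 requests per minute.",
    "Refunds are processed within five business days.",
    "Support is available on weekdays from 9am to 5pm.",
]

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, documents, top_k=1):
    """Rank documents by how many terms they share with the query."""
    query_terms = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_terms & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(query, documents, top_k=1):
    """Prepend the retrieved passages to the question."""
    context = "\n".join(retrieve(query, documents, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

prompt = build_prompt("What is the rate limit of the billing API?", DOCUMENTS)
```

The resulting `prompt` would then be sent to whichever language model the agent uses.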

6. Large Reasoning Models (LRMs)

Large Reasoning Models are designed to solve complex logical problems and perform multi-step reasoning. These models often use chain-of-thought reasoning techniques to break down problems.

Key Features: Logical reasoning, multi-step problem solving, planning capabilities.

GitHub Resource: GPT-NeoX (a library for training large autoregressive language models, not a reasoning-specific model)

7. Large Action Models (LAMs)

Large Action Models enable AI agents to interact with external tools, APIs, and software environments. These models are designed to execute tasks rather than simply generate text.

Key Features: Tool integration, workflow automation, autonomous task execution.

GitHub Resource: LangChain (a framework for connecting language models to tools and APIs, rather than a model itself)
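
One common way to turn model output into actions is to have the model emit a structured request that the agent validates and dispatches to a registered function. The sketch below assumes a JSON format with `tool` and `arguments` keys and two toy tools; these names are illustrative and do not come from any particular framework, and the model output is hard-coded rather than produced by a model.

```python
import json

def add(a, b):
    """Toy tool: add two numbers."""
    return a + b

def word_count(text):
    """Toy tool: count whitespace-separated words."""
    return len(text.split())

# Registry of tools the agent is allowed to call (hypothetical names).
TOOLS = {"add": add, "word_count": word_count}

def execute_action(action_json):
    """Parse a model-proposed action and run the matching tool."""
    action = json.loads(action_json)
    name = action["tool"]
    if name not in TOOLS:
        # Refuse anything outside the registry instead of guessing.
        return {"ok": False, "error": f"unknown tool: {name}"}
    result = TOOLS[name](**action.get("arguments", {}))
    return {"ok": True, "result": result}

# Stand-in for text a model might emit when asked to add 2 and 3.
model_output = '{"tool": "add", "arguments": {"a": 2, "b": 3}}'
outcome = execute_action(model_output)
```

Keeping an explicit registry means the agent can only run functions it was deliberately given, which matters once tools have side effects.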

8. Mixture-of-Experts Models (MoE)

Mixture-of-Experts models improve efficiency by routing each input token to a small subset of specialized sub-networks called experts. Because only the selected experts run for a given token, total parameter count can grow much faster than the compute spent per token.

Key Features: High scalability, sparse expert routing, efficient resource usage.

GitHub Resource: T5X
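
The routing step can be illustrated with a toy top-k gate. In this sketch the experts are simple scaling functions and the router weights are fixed, invented numbers; in a real MoE layer both are learned neural network parameters, and routing happens per token inside the model.

```python
import math

def softmax(scores):
    """Normalize scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def make_expert(scale):
    """Toy expert: multiplies its input vector by a constant."""
    return lambda x: [scale * v for v in x]

EXPERTS = [make_expert(s) for s in (1.0, 2.0, 3.0, 4.0)]

# Hypothetical router weights, one row per expert.
ROUTER = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, -1.0]]

def moe_forward(x, top_k=2):
    """Run only the top_k highest-scoring experts and mix their outputs."""
    logits = [sum(w * v for w, v in zip(row, x)) for row in ROUTER]
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:top_k]
    gates = softmax([logits[i] for i in chosen])  # renormalize over chosen experts
    output = [0.0] * len(x)
    for gate, idx in zip(gates, chosen):
        expert_out = EXPERTS[idx](x)  # unselected experts are never evaluated
        output = [o + gate * e for o, e in zip(output, expert_out)]
    return output, chosen

output, chosen = moe_forward([2.0, 1.0])
```

With four experts and `top_k=2`, only half of the expert computation runs for this input, which is the source of the efficiency gain.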

9. HRM (Hierarchical Reasoning Models)

HRMs are designed for complex hierarchical reasoning tasks. They break a problem into smaller sub-tasks, solve each one step by step, and combine the results.

Key Features: Multi-level reasoning, structured decision-making, task decomposition.

GitHub Resource: Tree of Thoughts (a search-based prompting method that explores branching reasoning paths)
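
Task decomposition of the kind described in this section can be sketched as a depth-first walk over a plan. This illustrates only the general idea, not any published HRM implementation: the `PLAN` dictionary is hand-written here, whereas an agent would have a model propose the sub-tasks, and "executing" a leaf task just records its name.

```python
# Hypothetical plan: each composite task maps to its ordered sub-tasks.
PLAN = {
    "ship feature": ["write code", "test code"],
    "test code": ["run unit tests", "run integration tests"],
}

def solve(task, trace=None):
    """Decompose composite tasks depth-first; record leaf tasks in order."""
    if trace is None:
        trace = []
    subtasks = PLAN.get(task)
    if not subtasks:
        trace.append(task)  # a leaf task is "executed" by recording it
    else:
        for sub in subtasks:
            solve(sub, trace)
    return trace

steps = solve("ship feature")
# steps == ["write code", "run unit tests", "run integration tests"]
```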

10. mHC Models (Multi-Head Cognitive Models)

mHC models use multiple reasoning heads to analyze problems from different perspectives simultaneously. This architecture improves accuracy and decision-making in complex environments.

Key Features: Parallel reasoning, multi-perspective analysis, improved decision accuracy.

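GitHub Resource: minGPT (a minimal GPT implementation, useful for studying multi-head attention rather than an mHC-specific codebase)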

11. Hybrid AI Agent Models

Hybrid AI models combine multiple architectures, including LLMs, RAG systems, reasoning engines, and action models, to create fully autonomous AI agents.

Key Features: Multi-model orchestration, autonomous workflows, task planning and execution.

GitHub Resources: AutoGen, AutoGPT

Why AI Agents Use Multiple Language Models

Modern AI systems rely on model orchestration rather than a single model. A typical AI agent workflow looks like this:

- User query interpretation using GPT

- Knowledge retrieval through RAG

- Visual processing via multimodal models

- Logical planning using reasoning models

- Task execution through action models

Because each stage can be tested, scaled, or swapped independently, this layered architecture can improve accuracy, scalability, and reliability in enterprise AI systems.
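
The workflow above can be sketched as a pipeline of stages that pass a shared state object along. Every stage here is a stub standing in for a model call, the names (`AgentState`, `interpret_query`, and so on) are hypothetical, and the visual processing stage is left out to keep the example short.

```python
from dataclasses import dataclass, field

@dataclass
class AgentState:
    """Data handed from one stage of the pipeline to the next."""
    query: str
    intent: str = ""
    context: list = field(default_factory=list)
    plan: list = field(default_factory=list)
    results: list = field(default_factory=list)

def interpret_query(state):
    # Stub for a general-purpose LLM classifying the request.
    state.intent = "lookup" if "?" in state.query else "command"
    return state

def retrieve_knowledge(state):
    # Stub for a RAG retrieval step.
    state.context.append(f"stub document about: {state.query}")
    return state

def make_plan(state):
    # Stub for a reasoning model producing ordered steps.
    state.plan = ["read context", "draft answer"]
    return state

def execute_plan(state):
    # Stub for an action model carrying out each step.
    state.results = [f"done: {step}" for step in state.plan]
    return state

PIPELINE = [interpret_query, retrieve_knowledge, make_plan, execute_plan]

def run_agent(query):
    """Run every stage in order over a fresh state."""
    state = AgentState(query=query)
    for stage in PIPELINE:
        state = stage(state)
    return state

final = run_agent("What is our refund policy?")
```

Because each stage shares the same signature, a stage can be replaced or reordered by editing the `PIPELINE` list alone.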

Future of Language Models in AI Agents

By 2030, AI agents will likely combine reasoning engines, long-term memory systems, multimodal perception, and autonomous decision-making. These systems will function as fully autonomous digital workers capable of managing complex workflows with minimal human input.

Businesses that adopt AI agents early may gain advantages in automation, productivity, and innovation.

Conclusion

AI agents are powered by a diverse ecosystem of language models. Together, these models enable AI agents to understand language, retrieve knowledge, reason logically, perceive the environment, and execute complex tasks autonomously.